Machine learning for automated close-range photogrammetry

Start: October 2018

Student: Joe Eastwood

Supervisors: Samanta Piano, Richard Leach

Funding: CDT in Ultra Precision Engineering

Close-range photogrammetry is an optical form measurement technique that detects and triangulates feature correspondences across a set of photographic images to create a 3D point cloud. It is attractive because its components are relatively low cost compared with competing technologies, such as fringe projection. The current measurement pipeline is, however, slow and dependent on user input.
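To illustrate the triangulation step, the sketch below recovers a 3D point from one feature correspondence seen by two cameras. It uses midpoint triangulation (the point halfway between the closest points on the two viewing rays), chosen here for simplicity and not necessarily the method used in this project. The function name `triangulate_midpoint` and the camera centres and ray directions in the usage lines are illustrative assumptions.

```python
def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def triangulate_midpoint(c1, d1, c2, d2):
    """Midpoint triangulation of two viewing rays.

    c1, c2: camera centres; d1, d2: ray directions through the matched
    image feature (all 3-tuples in a common world frame; hypothetical inputs).
    Returns the point midway between the closest points on the two rays.
    """
    w = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w), _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # parameter along ray 1
    t = (a * e - b * d) / denom  # parameter along ray 2
    p1 = tuple(ci + s * di for ci, di in zip(c1, d1))
    p2 = tuple(ci + t * di for ci, di in zip(c2, d2))
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


# Usage: two cameras one unit apart observing the same feature.
point = triangulate_midpoint(
    (0.0, 0.0, 0.0), (0.1, 0.2, 2.0),
    (1.0, 0.0, 0.0), (-0.9, 0.2, 2.0),
)
```

With noise-free rays the two lines intersect and the midpoint is the intersection itself; with real detections the rays are skew and the midpoint is one simple estimate of the surface point. Repeating this over many correspondences yields the point cloud.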

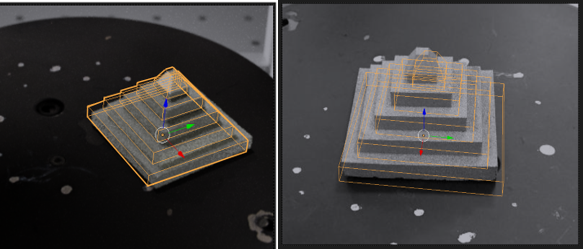

This project aims to move toward a fully autonomous and optimised photogrammetric pipeline without the need for specialised per-part fixturing or on-part fiducial markers. Early work has focussed on initially locating the part within the measurement volume from a single image using a residual neural network (Figure 1). A photo-realistic simulation of the measurement instrument was developed to generate training data autonomously from the CAD data of a part. Once the part is located within the volume, the camera is moved to a set of optimised imaging locations found using a genetic algorithm. Future work will refine this procedure, then investigate automating the characterisation of the camera’s intrinsic parameters.

Figure 1: Example six-degree-of-freedom pose predictions on two input images (reprojection of the predicted pose overlaid in yellow): a) a synthetic image, b) a real photographic image.
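The genetic-algorithm search for imaging locations described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the project's implementation: camera positions are reduced to (azimuth, elevation) pairs on a viewing hemisphere, and the fitness function (hypothetical) counts surface normals seen by at least two cameras within a 60° angle, since triangulation needs two views. All names and parameter values (`optimise_views`, `fitness`, `hemisphere_normals`, population size, mutation width) are assumptions made for the example.

```python
import math
import random


def view_direction(az, el):
    """Unit vector from the part towards a camera (angles in radians)."""
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))


def hemisphere_normals():
    """Toy stand-in for a part: outward normals sampled over a hemisphere."""
    normals = [(0.0, 0.0, 1.0)]
    for el_deg in (0, 30, 60):
        for k in range(12):
            normals.append(view_direction(2 * math.pi * k / 12, math.radians(el_deg)))
    return normals


def fitness(views, normals, max_angle=math.radians(60)):
    """Number of normals seen by two or more cameras (assumed visibility model)."""
    cos_limit = math.cos(max_angle)
    dirs = [view_direction(az, el) for az, el in views]
    score = 0
    for n in normals:
        seen = sum(1 for d in dirs if sum(a * b for a, b in zip(n, d)) >= cos_limit)
        if seen >= 2:
            score += 1
    return score


def crossover(a, b, rng):
    """Uniform crossover: each view is taken from one parent at random."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def mutate(views, rng, sigma=0.2):
    """Gaussian perturbation, keeping cameras on the upper hemisphere."""
    out = []
    for az, el in views:
        az = (az + rng.gauss(0, sigma)) % (2 * math.pi)
        el = min(max(el + rng.gauss(0, sigma), 0.0), math.pi / 2)
        out.append((az, el))
    return out


def optimise_views(normals, n_views=6, pop_size=30, generations=40, seed=0):
    """Return the best set of views found and the best score per generation."""
    rng = random.Random(seed)
    score = lambda v: fitness(v, normals)
    pop = [[(rng.uniform(0, 2 * math.pi), rng.uniform(0, math.pi / 2))
            for _ in range(n_views)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=score, reverse=True)
        history.append(score(pop[0]))
        elite = pop[:2]  # elitism: the two best individuals survive unchanged
        children = []
        while len(children) < pop_size - len(elite):
            p1 = max(rng.sample(pop, 3), key=score)  # tournament selection
            p2 = max(rng.sample(pop, 3), key=score)
            children.append(mutate(crossover(p1, p2, rng), rng))
        pop = elite + children
    return max(pop, key=score), history


best_views, history = optimise_views(hemisphere_normals())
```

Because the two best individuals are carried over unchanged each generation, the best score in `history` never decreases. A realistic fitness function would instead account for occlusion, depth of field and the expected measurement uncertainty of the real instrument.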