Advanced robotics

The Nottingham Advanced Robotics Laboratory, NARLy, is a part of the Advanced Manufacturing Research Group tasked with investigating the modelling and control of complex, often non-linear, electromechanical systems, with a particular focus on applications that involve intelligent robotic systems working in industry or healthcare.

Because such systems are used across a number of areas, our lab often collaborates with other groups and labs within the Faculty of Engineering and the Advanced Manufacturing Research Group.

Current research topics include:

- Modelling and control of non-linear multi-body systems

- Machine learning and machine vision

- Human-robot collaborative learning

- Digital manufacturing and Industry 4.0

Team members

Academic Staff

Projects

Chatty Factories

Member: Mojtaba Ahmadieh Khanesar

Supervisor: David T. Branson III

Funding: EPSRC

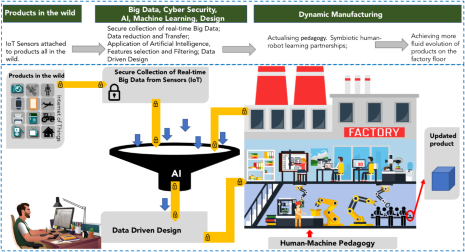

The Chatty Factories project is a three-year project funded by the Engineering and Physical Sciences Research Council (EPSRC) through its New Industrial Systems programme. This project explores the transformative potential of placing data-driven systems at the core of manufacturing processes, with the aim of increasing competitive advantage by offering greater opportunities to innovate and reducing time to market. Specifically, the research explores opportunities to collect data in real time from sensors embedded in products; to examine how that data might be transformed into usable information to optimise and produce innovative designs; and then to transfer these designs quickly and efficiently to factory production.

The University of Nottingham is responsible for work package 6 (of 6), which focuses on human-robot collaborative manufacture and aims to provide a deep understanding of how an expert worker performs tasks with, and communicates tasks to and from, robotic collaborators. By combining advanced machine learning techniques and digital twin technology with expert human advice, the aim is for industrial production to move beyond staged manual refinement and adapt dynamically to product and production variances.

Results will enable manufacturing ecosystems that can continuously reskill and reorient the human and robot production elements to improve efficiency, robustness and compliance within any regulatory structure.

More information about this project can be found at https://www.chattyfactories.org/.

Optimised Production System for Omnigen

Member: Wahyudin P. Syam

Supervisors: David T. Branson III, Katy Voisey

Funding: Innovate UK, NuVision Biotherapies Ltd.

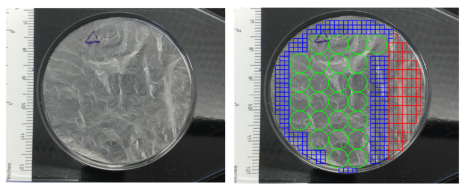

Omnigen is the first dry-preserved amnion-derived product developed as a directly accessible biological matrix that can be reconstituted in situ without any biological loss. It is currently used as an effective medical technology for a variety of non-healing conditions, including diabetic foot ulcers (DFU) and ocular surface disease (OSD). The general objective of this project is to develop and build a more efficient and robust manufacturing cell to increase its production.

Specifically, our work packages focus on optimising and automating the cutting and quality assessment steps using intelligent machine systems. The goals of this project are:

- Realising automatic, optimised selection of amnion cutting patterns over a large raw-material surface. With this optimised cutting selection, wasted amnion material will be significantly reduced.

- Realising an intelligent machine vision system to automatically assess the quality of each cut to the same quality and consistency level as human-based assessments. In this way, more objective and consistent amnion quality assessments will be obtained faster.

- Developing an innovative amnion material fixturing and handling system.

Enabling Technologies for Actuated Continuum Surfaces Undergoing Large Deformations

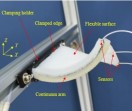

Actuated surfaces capable of large, continuous deformations are attracting a high level of interest in application areas such as robotics, healthcare, automotive, aerospace and manufacturing. However, their design, modelling and control have thus far been based on ‘trial and error’ methods that require highly specialised users, and have resulted in surfaces that achieve only very small deflections relative to their size. To realize actuated surfaces that can deform into high-curvature shapes, validated models based on physical and mechanical properties, including elasticity, material characteristics, gravity, external forces and thickness shear effects, will need to be integrated with novel design and production capabilities.

Towards this, the EPSRC-funded project (EP/N022505/1) first developed and validated a lumped-mass model for surfaces undergoing large actuated deformations to form continuous surface profiles. The resulting model included dynamic properties for both the surface and the actuation elements, enabling accurate deformation predictions for multiple actuation configurations and for internal and external loading. The model was further validated against an experimental “soft” robotic surface, with measured results showing an average error of less than 1% of the length of the surface’s side, both during movement and at the final deformed shapes.
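The project model itself is not reproduced here. As a rough illustration of the general lumped-mass idea only, the sketch below represents a surface as a square grid of point masses joined by linear spring-damper elements, clamps two opposite edges, applies gravity and a simple viscous drag, and optionally adds one point force standing in for an actuation element. The grid size, stiffness, damping, drag and actuator values are all hypothetical, the elements have no bending stiffness, and the integrator (semi-implicit Euler) is simply a convenient choice, not necessarily the one used in EP/N022505/1.

```python
import numpy as np


def build_grid(n, spacing):
    """Place n x n point masses on a flat grid in the z = 0 plane and
    connect each mass to its x and y neighbours with a spring element."""
    xs, ys = np.meshgrid(np.arange(n) * spacing, np.arange(n) * spacing, indexing="ij")
    pos = np.stack([xs.ravel(), ys.ravel(), np.zeros(n * n)], axis=1)
    springs = []
    for i in range(n):
        for j in range(n):
            k = i * n + j  # mass (i, j) is stored at row i * n + j
            if i + 1 < n:
                springs.append((k, (i + 1) * n + j))
            if j + 1 < n:
                springs.append((k, k + 1))
    return pos, np.array(springs)


def simulate(n=5, spacing=0.1, mass=0.01, k=50.0, c_spring=0.05, c_air=0.05,
             g=9.81, actuator=None, dt=1e-3, steps=5000):
    """Integrate the lumped-mass surface with semi-implicit Euler.

    actuator: optional (node_index, force_vector) pair, a hypothetical
    stand-in for a single actuation element pushing on the surface.
    """
    pos, springs = build_grid(n, spacing)
    a, b = springs[:, 0], springs[:, 1]
    rest = np.linalg.norm(pos[b] - pos[a], axis=1)
    vel = np.zeros_like(pos)
    # Clamp the two opposite edges x = 0 and x = (n - 1) * spacing.
    fixed = np.concatenate([np.arange(n), np.arange((n - 1) * n, n * n)])

    external = np.zeros_like(pos)
    external[:, 2] = -mass * g  # gravity on every lumped mass
    if actuator is not None:
        node, force = actuator
        external[node] += force

    for _ in range(steps):
        d = pos[b] - pos[a]
        length = np.linalg.norm(d, axis=1)
        unit = d / length[:, None]
        # Linear spring plus a damper acting along each element.
        stretch_rate = np.sum((vel[b] - vel[a]) * unit, axis=1)
        f = (k * (length - rest) + c_spring * stretch_rate)[:, None] * unit
        force = external - c_air * vel  # simple viscous drag on each mass
        np.add.at(force, a, f)   # a stretched element pulls mass a towards b...
        np.add.at(force, b, -f)  # ...and mass b towards a
        vel += dt * force / mass
        vel[fixed] = 0.0         # clamped masses never move
        pos += dt * vel
    return pos


centre = 2 * 5 + 2  # middle mass of the default 5 x 5 grid
passive = simulate()
actuated = simulate(actuator=(centre, np.array([0.0, 0.0, 0.5])))
print("centre height, passive:", passive[centre, 2])
print("centre height, actuated:", actuated[centre, 2])
```

Comparing the passive and actuated runs shows how one actuation configuration changes the predicted surface profile. A model intended to match measured shapes would also need bending elements, identified material parameters and a realistic actuator model.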

The next phase of this work is to link this model to surface design and production methods across a number of application areas, and to couple it with advanced control methods that ensure the desired deformations are achieved under loading. This will be achieved through two main pathways:

Pathway 1) Considers the construction of a continuous surface, complete with integrated sensors and actuation elements, using Additive Manufacturing techniques. This work will result in a single actuated surface that can be printed on demand and in a scalable manner. Because the construction methods employed are currently at a low technology readiness level (TRL), these surfaces will initially be smaller and geared more towards “soft” robotics to maximize deformation capabilities. However, they will contain embedded stiffening technologies to ensure disturbance rejection when holding shape.

Pathway 2) Investigates the development of larger actuated surfaces with greater force and displacement capabilities than currently demonstrated. This system will integrate actuation, sensor and material technologies into a much larger surface. As with Pathway 1), the resulting surface will contain embedded stiffening technologies, but it will be capable of holding position under much higher loading. It is anticipated that results from this work will be applicable within the aerospace, automotive, chemical, food and other fluid-based industries.

Through this work we aim to take actuated surfaces to the next stage by providing the tools needed for robust procedures for their design, construction and control. This will not only make direct, meaningful contributions to their use in areas such as exoskeleton systems in healthcare, but will also have further implications for industries requiring large-scale surface control, such as aerospace, automotive, energy and food processing.

Industrial Co-bots Understanding Behaviour (I-CUBE)

Investigators:

Michel Valstar, School of Computer Science

David Branson, Faculty of Engineering

Sue Cobb, Faculty of Engineering

Sarah Sharples, Faculty of Engineering

Mercedes Torres Torres, Horizon

Ke Zhou, School of Computer Science

Collaborative robots, or co-bots, are robots that work with humans in a productivity-enhancing process, most often in the manufacturing and/or healthcare domains. Despite this aim to collaborate, co-bots lack the ability to sense humans and their behaviour appropriately. Instead, they rely on explicit, hierarchical instructions given mechanically by the human, rather than using more natural means of communication, such as pose, expression and language, to determine their behaviour. In turn, humans do not understand how the robot makes its decisions. Co-bots also do not use human behaviour to learn tasks implicitly, and advances in online and reinforcement learning are needed to enable this. We argue that these problems can be addressed if we endow industrial co-bots with the ability to sense and interpret the actions, language and expressions of the person with whom they collaborate.

Towards this end, I-CUBE seeks to bring together the human factors and machine learning expertise of the Faculty of Engineering with the automatic human behaviour analysis expertise (including natural language processing) of the School of Computer Science, to build a human-robot collaborative platform that can sense humans and extend communication through language and behaviour understanding, supported by improved reinforcement learning capabilities.

Fabric-based sensor systems

Member: Cristina Isaia

Supervisors: Donal McNally, David T. Branson III

Funding: EPSRC, Innovate UK and Footfalls & Heartbeats

Over the past decades, wearable sensors have attracted the interest of researchers and clinicians thanks to their advantages. Standard measurement systems based on image-based techniques (such as the APAS or VICON systems) require the user to be in a specially outfitted room. By contrast, wearable systems comprising multiple inertial motion sensors (consisting of accelerometers, gyroscopes and polymer-based fabrics) fixed on lower-limb segments can capture lower-limb kinematics, such as joint angles and limb orientation, during ambulation. These systems are lightweight, not bulky, flexible and fitted closely to the human body; they can be used within various application areas (e.g. health, military, domestic) and are capable of recording motion outside the laboratory. However, while a number of sensor systems are described as “wearable” technology, they are actually composed of multiple sensor systems attached externally to the clothing being worn.

Despite their advantages, current wearable sensors suffer from several drawbacks depending on the type used. They must be placed correctly and securely, must account for gravity, noise and signal drift, and require multiple components to be removed before the garment can be cleaned. In some cases they must also be placed on the patient’s skin, do not record whole-body movements (e.g. smart watches and/or phones), or have not properly considered user preferences, which affects uptake of the systems. All of these factors can influence the subject’s behaviour during the measurements of interest, further decreasing effectiveness.
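To make the gravity and drift issue concrete: integrating a gyroscope’s angular rate alone accumulates any bias as a growing angle error, while an accelerometer gives a drift-free but noisy tilt estimate from the direction of gravity. A common, simple way to combine the two for a single limb segment is a complementary filter. The sketch below is an illustration under stated assumptions, not the method used in this project: motion in one plane, tilt taken as `atan2(ax, az)`, rates in rad/s, and a hypothetical blending factor `alpha` and gyro bias.

```python
import math


def complementary_filter(gyro_rates, accels, dt, alpha=0.98, angle0=0.0):
    """Fuse gyro rates (rad/s) with accelerometer (ax, az) samples into a
    single-plane tilt angle (rad). alpha weights the integrated gyro path."""
    angle = angle0
    estimates = []
    for rate, (ax, az) in zip(gyro_rates, accels):
        accel_angle = math.atan2(ax, az)  # tilt implied by the gravity vector
        # Short-term: trust the integrated gyro; long-term: pull towards gravity.
        angle = alpha * (angle + rate * dt) + (1.0 - alpha) * accel_angle
        estimates.append(angle)
    return estimates


# Hypothetical test case: a stationary segment held at 0.3 rad, read by a
# gyro with a constant 0.05 rad/s bias, sampled at 100 Hz for 20 s.
dt = 0.01
true_angle = 0.3
bias = 0.05
n = 2000
gyro = [bias] * n  # the true rate is zero, so the gyro reads only its bias
acc = [(9.81 * math.sin(true_angle), 9.81 * math.cos(true_angle))] * n

fused = complementary_filter(gyro, acc, dt)
gyro_only = true_angle + sum(r * dt for r in gyro)  # pure integration
print("gyro only:", gyro_only, "fused:", fused[-1])
```

With a constant bias `b`, the fused estimate settles at an offset of `alpha * b * dt / (1 - alpha)` instead of drifting without bound, so choosing `alpha` trades gyro smoothness against residual bias and accelerometer noise.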

Combining stainless steel with polyester fibres gives the yarn useful conductive behaviour. Once knitted, fabrics made from such yarn develop sensing properties that make these textiles, also known as e-textiles, suitable for smart/wearable applications. The advantages of using fabric are that it is more resilient and stronger, and can be used across a wider range of temperatures, than the polymer-based sensors currently considered for similar applications. Work to date has investigated the electrical properties exhibited by knitted conductive fabrics made of a combination of stainless steel and polyester fibres (© Footfalls & Heartbeats (UK) Limited). The aim is to study the usability of electrically conductive textiles as sensors embedded into garments for human motion capture. Further uses for fabric-based sensors include determining the surface force and displacement profiles associated with research on large-deformation surfaces. This work is being undertaken in collaboration with Footfalls & Heartbeats and the Bioengineering research group.

Open-Source Assistive Devices (OPAD)

UoN OPAD is a student-led group of University of Nottingham students, academics, technicians and researchers who volunteer their time to design and manufacture bespoke assistive devices for individuals with physical impairments. Our student-led groups work directly with their clients to provide design solutions that aim to improve their quality of life.

We are always looking to recruit new members from the University of Nottingham regardless of your year, experience or field of study. If you are keen to join us or would like to find out a bit more about what we do, feel free to pop along to one of our weekly sessions. Alternatively, you can contact us through OPAD@nottingham.ac.uk and we'll advise you of what to do next.

For those interested, OPAD is also a module under the Nottingham Advantage Award.

To enrol, please use the following link: https://workspace.nottingham.ac.uk/display/NAAMY/Open-source+Prosthetics+and+Assistive+Devices+%28OPAD%29+XN1143N

Undergraduate Student Projects

In addition to the research projects within the Nottingham Advanced Robotics Laboratory, we also supervise final-year undergraduate projects, third-year group design-and-make projects, summer projects and Erasmus placements on a number of other topics, including:

- The Freefall Camera - A group of student skydiving enthusiasts set out to design and construct a skydiving robot to capture video during freefall. They then went on to do final-year projects on individual parts of the system and are now working to turn the results into a saleable product.

- Machine learning - These projects cover a number of applications in which robotic systems learn how to achieve tasks, either on their own or through human-based reinforcement learning.

- Manipulation of deformable objects

- Continuum surface actuation and motion

- Automated computer vision

If you have an interest in these or other topics, Dr Branson will be happy to consider them as resources and time allow. If you are a University of Nottingham M3 undergraduate and/or an overseas Erasmus candidate, please contact him in person to discuss.